- Sheets interchange “r” and “x” for number of successes Chapter 5 Discrete Probability Distributions: 22 Mean of a discrete probability distribution: ( ) Standard deviation of a probability distribution: ( ) x Px x Px µ σµ =∑. =∑. − Binomial Distributions number of successes (or x) probability of success =.

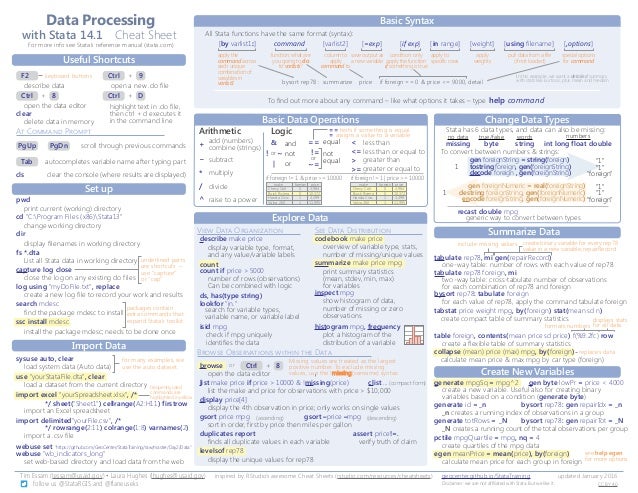

- DRAFT: Basic statistics with R Cheat Sheet.

- Essential Statistics with R: Cheat Sheet Important libraries to load If you don’t have a particular package installed already: install.packages(Tmisc).

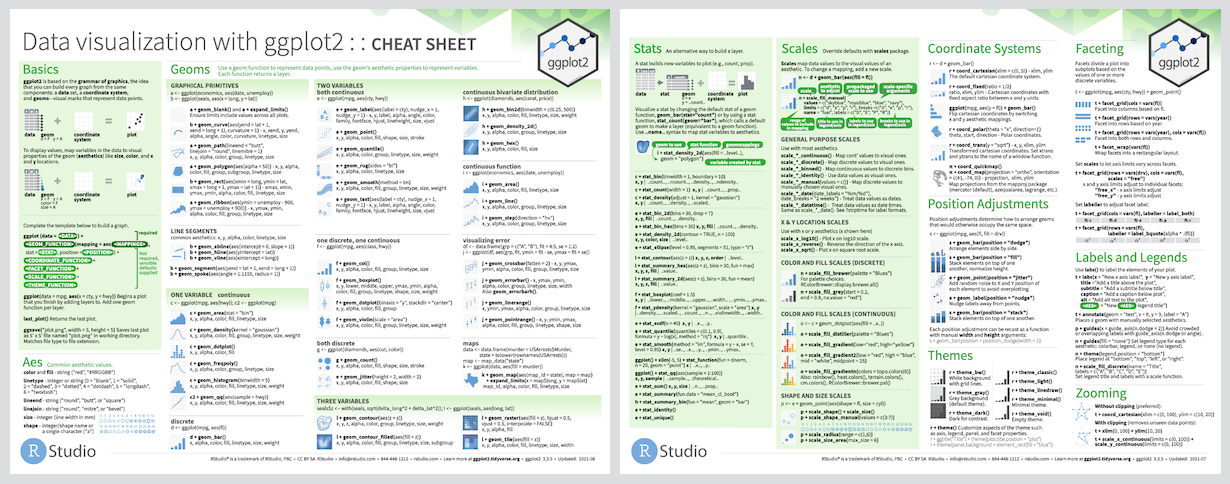

R has 657 built in color names To see a list of names: colors These colors are displayed on P. R color cheatsheet Finding a good color scheme for presenting data can be challenging. This color cheatsheet will help! R uses hexadecimal to represent colors Hexadecimal is a base-16 number system used to describe color. R Style Guide - This resource is more than a cheat sheet. Google's internal R user community put together this guide for clean R code that covers syntax & conventions that are unique to R. I include it here because I've refered to it quite a bit in my own work. Your code will be easy to read & maintain if you follow these guidelines.

By Afshine Amidi and Shervine Amidi

Parameter estimation

Definitions

Random sample A random sample is a collection of $n$ random variables $X_1, ..., X_n$ that are independent and share the same distribution as $X$.

Estimator An estimator is a function of the data that is used to infer the value of an unknown parameter in a statistical model.

Bias The bias of an estimator $\hat{\theta}$ is defined as the difference between the expected value of $\hat{\theta}$ and the true value $\theta$, i.e.:

$$\textrm{Bias}(\hat{\theta})=E[\hat{\theta}]-\theta$$

Remark: an estimator is said to be unbiased when we have $E[\hat{\theta}]=\theta$.

Estimating the mean

Sample mean The sample mean of a random sample is used to estimate the true mean $\mu$ of a distribution. It is often noted $\overline{X}$ and is defined as follows:

$$\overline{X}=\frac{1}{n}\sum_{i=1}^nX_i$$

Remark: the sample mean is unbiased, i.e. $E[\overline{X}]=\mu$.

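Characteristic function for sample mean The characteristic function for a sample mean is noted $\psi_{\overline{X}}$ and, with $\psi_X$ the characteristic function of $X$, is such that:

$$\psi_{\overline{X}}(\omega)=\psi_X^n\left(\frac{\omega}{n}\right)$$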

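Central Limit Theorem Let us have a random sample $X_1, ..., X_n$ following a given distribution with mean $\mu$ and variance $\sigma^2$, then we have:

$$\overline{X}\underset{n\rightarrow+\infty}{\sim}\mathcal{N}\left(\mu,\frac{\sigma}{\sqrt{n}}\right)$$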
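The Central Limit Theorem can be checked empirically. The sketch below is only an illustration: the choice of a uniform distribution on $[0,1]$ (mean $\frac{1}{2}$, variance $\frac{1}{12}$), the sample size, the number of trials and the helper name `sample_means` are all assumptions made for the example. It compares the observed spread of the sample means with the predicted standard deviation $\frac{\sigma}{\sqrt{n}}$.

```python
import random
import statistics
from math import sqrt


def sample_means(n, trials, seed=0):
    """Draw `trials` samples of size `n` from Uniform(0, 1) and return their means."""
    rng = random.Random(seed)
    return [statistics.fmean(rng.random() for _ in range(n)) for _ in range(trials)]


n = 30
means = sample_means(n, trials=20000)

# Uniform(0, 1) has mean 1/2 and standard deviation sqrt(1/12).
predicted_sd = sqrt(1 / 12) / sqrt(n)
observed_sd = statistics.stdev(means)
observed_mean = statistics.fmean(means)

print(f"mean of sample means: {observed_mean:.4f} (expected 0.5)")
print(f"sd of sample means:   {observed_sd:.4f} (predicted {predicted_sd:.4f})")
```

The two standard deviations should agree closely, even though the underlying distribution is not normal.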

Estimating the variance

Sample variance The sample variance of a random sample is used to estimate the true variance $\sigma^2$ of a distribution. It is often noted $s^2$ or $\hat{\sigma}^2$ and is defined as follows:

$$s^2=\hat{\sigma}^2=\frac{1}{n-1}\sum_{i=1}^n(X_i-\overline{X})^2$$

Remark: the sample variance is unbiased, i.e. $E[s^2]=\sigma^2$.
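To see why the sample variance divides by $n-1$ rather than $n$, a small simulation can help. This is a sketch under assumed settings (normal data with $\sigma=2$, samples of size 5, hypothetical helper names); it averages both versions of the estimator over many samples and compares them with the true variance $\sigma^2=4$.

```python
import random


def variance_estimates(sample):
    """Return (sum of squares / (n - 1), sum of squares / n) for one sample."""
    n = len(sample)
    mean = sum(sample) / n
    sum_sq = sum((x - mean) ** 2 for x in sample)
    return sum_sq / (n - 1), sum_sq / n


def average_estimates(n=5, sigma=2.0, trials=20000, seed=0):
    """Average both estimators over many simulated normal samples."""
    rng = random.Random(seed)
    total_unbiased = 0.0
    total_biased = 0.0
    for _ in range(trials):
        sample = [rng.gauss(0.0, sigma) for _ in range(n)]
        unbiased, biased = variance_estimates(sample)
        total_unbiased += unbiased
        total_biased += biased
    return total_unbiased / trials, total_biased / trials


avg_unbiased, avg_biased = average_estimates()
print(f"divide by n-1: {avg_unbiased:.3f}")  # close to sigma^2 = 4
print(f"divide by n:   {avg_biased:.3f}")    # close to (n-1)/n * sigma^2 = 3.2
```

The version dividing by $n$ underestimates the variance by a factor of $\frac{n-1}{n}$ on average, which is the bias the $n-1$ divisor removes.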

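Chi-Squared relation with sample variance Let $s^2$ be the sample variance of a random sample drawn from a normal distribution with variance $\sigma^2$. We have:

$$\frac{s^2(n-1)}{\sigma^2}\sim\chi_{n-1}^2$$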

Confidence intervals

Definitions

Confidence level A confidence interval with confidence level $1-\alpha$ is such that $1-\alpha$ of the time, the true value is contained in the confidence interval.

Confidence interval A confidence interval $CI_{1-\alpha}$ with confidence level $1-\alpha$ of a true parameter $\theta$ is such that:

$$P(\theta\in CI_{1-\alpha})=1-\alpha$$

With the notation of the example above, a possible $1-\alpha$ confidence interval for $\theta$ is given by $CI_{1-\alpha} = [x_1, x_2]$.
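The meaning of "$1-\alpha$ of the time" can be illustrated by repeating an experiment many times and counting how often the interval contains the true value. The sketch below assumes normal data with known $\sigma$ and uses the interval $\overline{X}\pm z_{\frac{\alpha}{2}}\frac{\sigma}{\sqrt{n}}$; the parameter values and the function name `coverage` are illustrative choices.

```python
import random
from math import sqrt
from statistics import NormalDist, fmean


def coverage(mu=3.0, sigma=2.0, n=25, alpha=0.05, trials=5000, seed=1):
    """Fraction of simulated intervals that contain the true mean `mu`."""
    rng = random.Random(seed)
    # z_{alpha/2} is the upper alpha/2 quantile of the standard normal.
    z = NormalDist().inv_cdf(1 - alpha / 2)
    half_width = z * sigma / sqrt(n)
    hits = 0
    for _ in range(trials):
        x_bar = fmean(rng.gauss(mu, sigma) for _ in range(n))
        if x_bar - half_width <= mu <= x_bar + half_width:
            hits += 1
    return hits / trials


rate = coverage()
print(f"empirical coverage: {rate:.3f} (nominal 0.95)")
```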

Confidence interval for the mean

When determining a confidence interval for the mean $\mu$, different test statistics have to be computed depending on which case we are in. The table below sums it up.

| Distribution of $X_i$ | Sample size $n$ | Variance $\sigma^2$ | Statistic | $1-\alpha$ confidence interval |
|---|---|---|---|---|
| $X_i\sim\mathcal{N}(\mu, \sigma)$ | any | known | $\displaystyle\frac{\overline{X}-\mu}{\frac{\sigma}{\sqrt{n}}}\sim\mathcal{N}(0,1)$ | $\left[\overline{X}-z_{\frac{\alpha}{2}}\frac{\sigma}{\sqrt{n}},\overline{X}+z_{\frac{\alpha}{2}}\frac{\sigma}{\sqrt{n}}\right]$ |
| $X_i\sim$ any distribution | large | known | $\displaystyle\frac{\overline{X}-\mu}{\frac{\sigma}{\sqrt{n}}}\sim\mathcal{N}(0,1)$ | $\left[\overline{X}-z_{\frac{\alpha}{2}}\frac{\sigma}{\sqrt{n}},\overline{X}+z_{\frac{\alpha}{2}}\frac{\sigma}{\sqrt{n}}\right]$ |
| $X_i\sim$ any distribution | large | unknown | $\displaystyle\frac{\overline{X}-\mu}{\frac{s}{\sqrt{n}}}\sim\mathcal{N}(0,1)$ | $\left[\overline{X}-z_{\frac{\alpha}{2}}\frac{s}{\sqrt{n}},\overline{X}+z_{\frac{\alpha}{2}}\frac{s}{\sqrt{n}}\right]$ |
| $X_i\sim\mathcal{N}(\mu, \sigma)$ | small | unknown | $\displaystyle\frac{\overline{X}-\mu}{\frac{s}{\sqrt{n}}}\sim t_{n-1}$ | $\left[\overline{X}-t_{\frac{\alpha}{2}}\frac{s}{\sqrt{n}},\overline{X}+t_{\frac{\alpha}{2}}\frac{s}{\sqrt{n}}\right]$ |
| $X_i\sim$ any distribution | small | known or unknown | Go home! | Go home! |
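As a worked instance of the first row of the table (normal data, known $\sigma$), the sketch below computes the interval from raw data. The function name `z_interval` and the three data points are made up for illustration; $z_{\frac{\alpha}{2}}$ is taken to be the upper $\frac{\alpha}{2}$ quantile of the standard normal distribution.

```python
from math import sqrt
from statistics import NormalDist, fmean


def z_interval(data, sigma, alpha=0.05):
    """1 - alpha confidence interval for the mean when sigma is known."""
    n = len(data)
    x_bar = fmean(data)
    z = NormalDist().inv_cdf(1 - alpha / 2)  # upper alpha/2 quantile
    half_width = z * sigma / sqrt(n)
    return x_bar - half_width, x_bar + half_width


low, high = z_interval([9.0, 10.0, 11.0], sigma=1.0)
print(f"95% CI for the mean: [{low:.3f}, {high:.3f}]")
```

The rows using $t_{\frac{\alpha}{2}}$ follow the same pattern with a Student quantile in place of `z`; the Python standard library does not provide one, so a statistics package would be needed there.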

Note: a step by step guide to estimate the mean, in the case when the variance is known, is detailed here.

Confidence interval for the variance

The single-line table below sums up the test statistic to compute when determining the confidence interval for the variance.

| Distribution of $X_i$ | Sample size $n$ | Mean $\mu$ | Statistic | $1-\alpha$ confidence interval |
|---|---|---|---|---|
| $X_i\sim\mathcal{N}(\mu,\sigma)$ | any | known or unknown | $\displaystyle\frac{s^2(n-1)}{\sigma^2}\sim\chi_{n-1}^2$ | $\left[\frac{s^2(n-1)}{\chi_2^2},\frac{s^2(n-1)}{\chi_1^2}\right]$ |

Note: a step by step guide to estimate the variance is detailed here.

Hypothesis testing

General definitions

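Type I error In a hypothesis test, the type I error, often noted $\alpha$ and also called 'false alarm' or significance level, is the probability of rejecting the null hypothesis while the null hypothesis is true. If we note $T$ the test statistic and $R$ the rejection region, then we have:

$$\alpha=P(T\in R|H_0\textrm{ true})$$

Type II error In a hypothesis test, the type II error, often noted $\beta$ and also called 'missed alarm', is the probability of not rejecting the null hypothesis while the null hypothesis is not true. If we note $T$ the test statistic and $R$ the rejection region, then we have:

$$\beta=P(T\notin R|H_1\textrm{ true})$$

p-value In a hypothesis test, the $p$-value is the probability under the null hypothesis of having a test statistic $T$ at least as extreme as the one that we observed $T_0$. Depending on whether the test is left-sided, right-sided or two-sided, we have:

$$\textrm{left-sided: }p\textrm{-value}=P(T\leqslant T_0\,|\,H_0\textrm{ true})$$

$$\textrm{right-sided: }p\textrm{-value}=P(T\geqslant T_0\,|\,H_0\textrm{ true})$$

$$\textrm{two-sided: }p\textrm{-value}=P(|T|\geqslant|T_0|\,|\,H_0\textrm{ true})$$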
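To make the two error types concrete, consider a setting chosen purely for illustration: a right-sided $z$-test of $H_0:\mu=\mu_0$ against a specific alternative $\mu=\mu_1>\mu_0$, with known $\sigma$. The test statistic is $T=\frac{\overline{X}-\mu_0}{\sigma/\sqrt{n}}$ and the rejection region is $R=\{T>z_{\alpha}\}$. Under the alternative, $T$ is normal with mean $\frac{(\mu_1-\mu_0)\sqrt{n}}{\sigma}$ and unit variance, which gives $\beta$ directly. The function name and the numbers below are assumptions.

```python
from math import sqrt
from statistics import NormalDist


def type_ii_error(mu0, mu1, sigma, n, alpha=0.05):
    """Type II error of a right-sided z-test against the alternative mu = mu1."""
    std_normal = NormalDist()
    z_crit = std_normal.inv_cdf(1 - alpha)   # boundary of the rejection region
    shift = (mu1 - mu0) * sqrt(n) / sigma    # mean of T when mu = mu1
    # beta = P(T <= z_crit) when T ~ N(shift, 1)
    return std_normal.cdf(z_crit - shift)


beta = type_ii_error(mu0=0.0, mu1=0.5, sigma=1.0, n=16)
print(f"beta  = {beta:.3f}")
print(f"power = {1 - beta:.3f}")
```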

Remark: the example below illustrates the case of a right-sided $p$-value.
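The three kinds of $p$-value can be computed side by side once the distribution of $T$ under $H_0$ is known. The sketch below assumes, for illustration only, that $T\sim\mathcal{N}(0,1)$ under $H_0$; the function name `p_values` is hypothetical.

```python
from statistics import NormalDist


def p_values(t0):
    """Left-, right- and two-sided p-values for an observed z statistic t0."""
    cdf = NormalDist().cdf
    return {
        "left": cdf(t0),                       # P(T <= t0)
        "right": 1 - cdf(t0),                  # P(T >= t0)
        "two-sided": 2 * (1 - cdf(abs(t0))),   # P(|T| >= |t0|)
    }


result = p_values(1.96)
for side, value in result.items():
    print(f"{side:>9}: {value:.4f}")
```

For an observed value of 1.96 the right-sided $p$-value is about 0.025 and the two-sided one about 0.05, which matches the usual 5% two-sided threshold.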

Non-parametric test A non-parametric test is a test where we do not have any underlying assumption regarding the distribution of the sample.

Testing for the difference in two means

The table below sums up the test statistic to compute when performing a hypothesis test where the null hypothesis is:

$$H_0\quad:\quad\mu_X-\mu_Y=\delta$$

| Distribution of $X_i, Y_i$ | Sample size $n_X, n_Y$ | Variance $\sigma_X^2, \sigma_Y^2$ | Test statistic under $H_0$ |
|---|---|---|---|
| Normal | any | known | $\displaystyle\frac{(\overline{X}-\overline{Y})-\delta}{\sqrt{\frac{\sigma_X^2}{n_X}+\frac{\sigma_Y^2}{n_Y}}}\underset{H_0}{\sim}\mathcal{N}(0,1)$ |
| Normal | large | unknown | $\displaystyle\frac{(\overline{X}-\overline{Y})-\delta}{\sqrt{\frac{s_X^2}{n_X}+\frac{s_Y^2}{n_Y}}}\underset{H_0}{\sim}\mathcal{N}(0,1)$ |
| Normal | small | unknown with $\sigma_X=\sigma_Y$ | $\displaystyle\frac{(\overline{X}-\overline{Y})-\delta}{s\sqrt{\frac{1}{n_X}+\frac{1}{n_Y}}}\underset{H_0}{\sim}t_{n_X+n_Y-2}$ |
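The first row of the table (normal data, known variances) can be evaluated from summary statistics alone. The sketch below is an illustration with made-up numbers and a hypothetical function name; it computes the statistic and a two-sided $p$-value from the standard normal distribution.

```python
from math import sqrt
from statistics import NormalDist


def two_sample_z(mean_x, mean_y, var_x, var_y, n_x, n_y, delta=0.0):
    """z statistic for H0: mu_X - mu_Y = delta with known variances."""
    standard_error = sqrt(var_x / n_x + var_y / n_y)
    return ((mean_x - mean_y) - delta) / standard_error


# Illustrative summary statistics (not taken from any real data set).
z = two_sample_z(mean_x=10.0, mean_y=8.0, var_x=4.0, var_y=9.0, n_x=16, n_y=36)
p_two_sided = 2 * (1 - NormalDist().cdf(abs(z)))
print(f"z = {z:.4f}, two-sided p-value = {p_two_sided:.4f}")
```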

Testing for the mean of a paired sample

We suppose here that $X_i$ and $Y_i$ are pairwise dependent. By noting $D_i=X_i-Y_i$, the one-line table below sums up the test statistic to compute when performing a hypothesis test where the null hypothesis is:

$$H_0\quad:\quad\mu_D=\delta$$

| Distribution of $X_i, Y_i$ | Sample size $n=n_X=n_Y$ | Variance $\sigma_X^2, \sigma_Y^2$ | Test statistic under $H_0$ |
|---|---|---|---|
| Normal, paired | any | unknown | $\displaystyle\frac{\overline{D}-\delta}{\frac{s_D}{\sqrt{n}}}\underset{H_0}{\sim}t_{n-1}$ |
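The paired statistic only needs the differences $D_i$. A minimal sketch, with made-up before/after measurements and a hypothetical function name, is given below. It returns the statistic together with its degrees of freedom $n-1$; turning that into a $p$-value requires a Student $t$ distribution, which is not in the Python standard library.

```python
from math import sqrt
from statistics import fmean, stdev


def paired_t_statistic(x, y, delta=0.0):
    """t statistic and degrees of freedom for H0: mu_D = delta, with D_i = X_i - Y_i."""
    if len(x) != len(y):
        raise ValueError("paired samples must have the same length")
    d = [xi - yi for xi, yi in zip(x, y)]
    n = len(d)
    # stdev uses the n - 1 divisor, matching the sample standard deviation s_D.
    t = (fmean(d) - delta) / (stdev(d) / sqrt(n))
    return t, n - 1


t_stat, dof = paired_t_statistic(
    [5.0, 7.0, 9.0, 6.0, 8.0],
    [4.0, 5.0, 6.0, 4.0, 6.0],
)
print(f"t = {t_stat:.4f} with {dof} degrees of freedom")
```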

Testing for the median

Median of a distribution We define the median $m$ of a distribution as follows:

$$P(X\leqslant m)=P(X\geqslant m)=\frac{1}{2}$$

Sign test The sign test is a non-parametric test used to determine whether the median of a sample is equal to the hypothesized median.

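By noting $V\underset{H_0}{\sim}\mathcal{B}(n,p=\frac{1}{2})$ the number of samples falling to the right of the hypothesized median, we have:

- If $np\geqslant5$, we use the following test statistic:

$$Z=\frac{V-\frac{n}{2}}{\frac{\sqrt{n}}{2}}\underset{H_0}{\sim}\mathcal{N}(0,1)$$

- If $np < 5$, we use the following fact:

$$P(V\leqslant v)=\frac{1}{2^n}\sum_{k=0}^v\binom{n}{k}$$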
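Both branches of the sign test are short to compute. The sketch below assumes the standard forms of the two quantities: the normal approximation $Z=\frac{V-n/2}{\sqrt{n}/2}$ for $np\geqslant5$, and the exact binomial tail $P(V\leqslant v)=\frac{1}{2^n}\sum_{k=0}^v\binom{n}{k}$ otherwise. Function names and the example counts are illustrative.

```python
from math import comb, sqrt


def sign_test_z(v, n):
    """Normal approximation: standardized count of samples above the median."""
    return (v - n / 2) / (sqrt(n) / 2)


def sign_test_exact_cdf(v, n):
    """Exact P(V <= v) when V ~ Binomial(n, 1/2)."""
    return sum(comb(n, k) for k in range(v + 1)) / 2 ** n


# Large-sample case: 14 of 20 observations above the hypothesized median (np = 10).
z = sign_test_z(14, 20)
# Small-sample case: 1 of 8 observations above the hypothesized median (np = 4).
tail = sign_test_exact_cdf(1, 8)
print(f"z = {z:.4f}")
print(f"P(V <= 1) = {tail:.6f}")
```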

$\chi^2$ test

Goodness of fit test Let us have $k$ bins where in each of them, we observe $Y_i$ number of samples. Our null hypothesis is that $Y_i$ follows a binomial distribution with probability of success being $p_i$ for each bin.

We want to test whether modelling the problem as described above is reasonable given the data that we have. In order to do this, we perform a hypothesis test:

$$H_0:\textrm{good fit}\quad\textrm{versus}\quad H_1:\textrm{not good fit}$$

$\chi^2$ statistic for goodness of fit In order to perform the goodness of fit test, we need to compute a test statistic that we can compare to a reference distribution. By noting $k$ the number of bins, $n$ the total number of samples, if we have $np_i\geqslant5$, the test statistic $T$ defined below will enable us to perform the hypothesis test:

$$T=\sum_{i=1}^k\frac{(Y_i-np_i)^2}{np_i}\underset{H_0}{\sim}\chi_{df}^2\quad\textrm{with}\quad df=(k-1)-\#(\textrm{estimated parameters})$$
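As an illustration of the goodness of fit statistic, assumed here to be the usual Pearson form $T=\sum_{i=1}^k\frac{(Y_i-np_i)^2}{np_i}$, the sketch below tests whether a die is fair from 60 made-up rolls. The function name and the counts are hypothetical; the function refuses to run when the condition $np_i\geqslant5$ fails.

```python
def chi2_statistic(observed, probs):
    """Pearson goodness-of-fit statistic for bin counts and hypothesized probabilities."""
    if len(observed) != len(probs):
        raise ValueError("one probability is needed per bin")
    n = sum(observed)
    expected = [n * p for p in probs]
    if min(expected) < 5:
        raise ValueError("the approximation requires n * p_i >= 5 in every bin")
    return sum((y - e) ** 2 / e for y, e in zip(observed, expected))


# 60 rolls of a die, hypothesized to be fair (p_i = 1/6, expected count 10 per face).
counts = [8, 12, 10, 10, 9, 11]
t_stat = chi2_statistic(counts, [1 / 6] * 6)
degrees_of_freedom = len(counts) - 1  # no parameter was estimated from the data
print(f"T = {t_stat:.3f} with {degrees_of_freedom} degrees of freedom")
```

The statistic is then compared with a $\chi^2$ quantile with the given degrees of freedom, taken from a table or a statistics package.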

Trends test

Number of transpositions In a given sequence, we define the number of transpositions, noted $T$, as the number of times that a larger number precedes a smaller one.

Example: the sequence $\{1,5,4,3\}$ has $T=3$ transpositions because $5>4$, $5>3$ and $4>3$.

Test for arbitrary trends Given a sequence, the test for arbitrary trends is a non-parametric test, whose aim is to determine whether the data suggest the presence of an increasing trend:

$$H_0:\textrm{no trend}\quad\textrm{versus}\quad H_1:\textrm{there is an increasing trend}$$

If we note $x$ the number of transpositions in the sequence, the $p$-value is computed as:

$$p\textrm{-value}=P(T\leqslant x)$$

Remark: the test for a decreasing trend of a given sequence is equivalent to a test for an increasing trend of the reversed sequence.
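For short sequences the trend test can be carried out exactly. The sketch below counts transpositions and then computes $P(T\leqslant x)$ by brute force, under the assumption that all orderings of the values are equally likely when there is no trend and that the values are distinct. Enumeration grows as $n!$, so this is only meant for small $n$; the function names are hypothetical.

```python
from fractions import Fraction
from itertools import permutations


def count_transpositions(seq):
    """Number of pairs (i, j) with i < j where a larger value precedes a smaller one."""
    return sum(
        1
        for i in range(len(seq))
        for j in range(i + 1, len(seq))
        if seq[i] > seq[j]
    )


def increasing_trend_p_value(seq):
    """Exact P(T <= x) over all orderings of `seq` (distinct values assumed)."""
    x = count_transpositions(seq)
    total = 0
    at_most_x = 0
    for ordering in permutations(seq):
        total += 1
        if count_transpositions(ordering) <= x:
            at_most_x += 1
    return Fraction(at_most_x, total)


print(count_transpositions([1, 5, 4, 3]))        # the example from the text
print(increasing_trend_p_value([1, 2, 4, 3]))    # nearly sorted sequence
```

A small number of transpositions means the sequence is close to sorted, so a small $p$-value supports an increasing trend.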

Regression analysis

In the following section, we will note $(x_1, Y_1), ..., (x_n, Y_n)$ a collection of $n$ data points.

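Simple linear model Let $X$ be a deterministic variable and $Y$ a dependent random variable. In the context of a simple linear model, we assume that $Y$ is linked to $X$ via the regression coefficients $\alpha, \beta$ and a random variable $e\sim\mathcal{N}(0,\sigma)$, where $e$ is referred to as the error. We have:

$$Y=\alpha+\beta X+e$$

Regression estimation When estimating the regression coefficients $\alpha, \beta$ by $A, B$, we obtain predicted values $\hat{Y}_i$ as follows:

$$\hat{Y}_i=A+Bx_i$$

Sum of squared errors By keeping the same notations, we define the sum of squared errors, also known as SSE, as follows:

$$SSE=\sum_{i=1}^n(Y_i-\hat{Y}_i)^2=\sum_{i=1}^n(Y_i-(A+Bx_i))^2$$

Method of least-squares The least-squares method is used to find estimates $A,B$ of the regression coefficients $\alpha,\beta$ by minimizing the SSE. In other words, we have:

$$A,B=\underset{\alpha,\beta}{\textrm{arg min}}\sum_{i=1}^n(Y_i-(\alpha+\beta x_i))^2$$

Notations Given $n$ data points $(x_i, Y_i)$, and noting $\overline{X}$ and $\overline{Y}$ the averages of the $x_i$ and of the $Y_i$, we define $S_{XY},S_{XX}$ and $S_{YY}$ as follows:

$$S_{XY}=\sum_{i=1}^n(x_i-\overline{X})(Y_i-\overline{Y})\quad\textrm{and}\quad S_{XX}=\sum_{i=1}^n(x_i-\overline{X})^2\quad\textrm{and}\quad S_{YY}=\sum_{i=1}^n(Y_i-\overline{Y})^2$$

Least-squares estimates When estimating the coefficients $\alpha, \beta$ with the least-squares method, we obtain the estimates $A, B$ defined as follows:

$$A=\overline{Y}-\frac{S_{XY}}{S_{XX}}\overline{X}\quad\textrm{and}\quad B=\frac{S_{XY}}{S_{XX}}$$

Sum of squared errors revisited The sum of squared errors defined above can also be written in terms of $S_{YY}$, $S_{XY}$ and $B$ as follows:

$$SSE=S_{YY}-BS_{XY}$$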
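The least-squares quantities can be checked numerically on a small data set. The sketch below uses four made-up points and assumes the standard closed forms $B=\frac{S_{XY}}{S_{XX}}$ and $A=\overline{Y}-B\,\overline{X}$. It then computes the SSE twice, once from the residuals and once from the shortcut $S_{YY}-BS_{XY}$, to show that the two agree. The function name is hypothetical.

```python
from statistics import fmean


def least_squares(x, y):
    """Return (A, B, SSE from residuals, SSE from S_YY - B * S_XY)."""
    x_bar, y_bar = fmean(x), fmean(y)
    s_xx = sum((xi - x_bar) ** 2 for xi in x)
    s_yy = sum((yi - y_bar) ** 2 for yi in y)
    s_xy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))

    b = s_xy / s_xx            # slope estimate B
    a = y_bar - b * x_bar      # intercept estimate A

    sse_direct = sum((yi - (a + b * xi)) ** 2 for xi, yi in zip(x, y))
    sse_shortcut = s_yy - b * s_xy
    return a, b, sse_direct, sse_shortcut


a, b, sse_direct, sse_shortcut = least_squares([1, 2, 3, 4], [2, 4, 5, 8])
print(f"A = {a:.3f}, B = {b:.3f}")
print(f"SSE from residuals = {sse_direct:.3f}, SSE from shortcut = {sse_shortcut:.3f}")
```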

Key results

When $\sigma$ is unknown, the variance $\sigma^2$ is estimated by the unbiased estimator $s^2$ defined as follows:

$$s^2=\frac{S_{YY}-BS_{XY}}{n-2}$$

The estimator $s^2$ has the following property:

$$\frac{s^2(n-2)}{\sigma^2}\sim\chi_{n-2}^2$$

The table below sums up the properties surrounding the least-squares estimates $A, B$ when $\sigma$ is known or not:

| Coefficient | Estimate | $\sigma$ | Statistic | $1-\alpha$ confidence interval |
|---|---|---|---|---|
| $\alpha$ | $A$ | known | $\frac{A-\alpha}{\sigma\sqrt{\frac{1}{n}+\frac{\overline{X}^2}{S_{XX}}}}\sim\mathcal{N}(0,1)$ | $\left[A-z_{\frac{\alpha}{2}}\sigma\sqrt{\frac{1}{n}+\frac{\overline{X}^2}{S_{XX}}},A+z_{\frac{\alpha}{2}}\sigma\sqrt{\frac{1}{n}+\frac{\overline{X}^2}{S_{XX}}}\right]$ |
| $\beta$ | $B$ | known | $\frac{B-\beta}{\frac{\sigma}{\sqrt{S_{XX}}}}\sim\mathcal{N}(0,1)$ | $\left[B-z_{\frac{\alpha}{2}}\frac{\sigma}{\sqrt{S_{XX}}},B+z_{\frac{\alpha}{2}}\frac{\sigma}{\sqrt{S_{XX}}}\right]$ |
| $\alpha$ | $A$ | unknown | $\frac{A-\alpha}{s\sqrt{\frac{1}{n}+\frac{\overline{X}^2}{S_{XX}}}}\sim t_{n-2}$ | $\left[A-t_{\frac{\alpha}{2}}s\sqrt{\frac{1}{n}+\frac{\overline{X}^2}{S_{XX}}},A+t_{\frac{\alpha}{2}}s\sqrt{\frac{1}{n}+\frac{\overline{X}^2}{S_{XX}}}\right]$ |
| $\beta$ | $B$ | unknown | $\frac{B-\beta}{\frac{s}{\sqrt{S_{XX}}}}\sim t_{n-2}$ | $\left[B-t_{\frac{\alpha}{2}}\frac{s}{\sqrt{S_{XX}}},B+t_{\frac{\alpha}{2}}\frac{s}{\sqrt{S_{XX}}}\right]$ |

Correlation analysis

Correlation coefficient The correlation coefficient of two random variables $X$ and $Y$ is noted $\rho$ and is defined as follows:

$$\rho=\frac{E[(X-\mu_X)(Y-\mu_Y)]}{\sqrt{E[(X-\mu_X)^2]E[(Y-\mu_Y)^2]}}$$

Sample correlation coefficient The correlation coefficient is in practice estimated by the sample correlation coefficient, often noted $r$ or $\hat{\rho}$, which is defined as:

$$r=\hat{\rho}=\frac{S_{XY}}{\sqrt{S_{XX}S_{YY}}}$$

Testing for correlation In order to perform a hypothesis test with $H_0$ being that there is no correlation between $X$ and $Y$, we use the following statistic:

$$\frac{r\sqrt{n-2}}{\sqrt{1-r^2}}\underset{H_0}{\sim}t_{n-2}$$

Fisher transformation The Fisher transformation is often used to build confidence intervals for correlation. It is noted $V$ and defined as follows:

$$V=\frac{1}{2}\ln\left(\frac{1+r}{1-r}\right)$$


By noting $V_1=V-\frac{z_{\frac{\alpha}{2}}}{\sqrt{n-3}}$ and $V_2=V+\frac{z_{\frac{\alpha}{2}}}{\sqrt{n-3}}$, the table below sums up the key results surrounding the correlation coefficient estimate:


| Sample size | Standardized statistic | $1-\alpha$ confidence interval for $\rho$ |
|---|---|---|
| large | $\displaystyle\frac{V-\frac{1}{2}\ln\left(\frac{1+\rho}{1-\rho}\right)}{\frac{1}{\sqrt{n-3}}}\underset{n\gg1}{\sim}\mathcal{N}(0,1)$ | $\displaystyle\left[\frac{e^{2V_1}-1}{e^{2V_1}+1},\frac{e^{2V_2}-1}{e^{2V_2}+1}\right]$ |
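The confidence interval in the table can be computed in a few lines. The sketch below assumes the Fisher transformation $V=\frac{1}{2}\ln\left(\frac{1+r}{1-r}\right)$ of the sample correlation $r$, builds $V_1$ and $V_2$, and maps them back with $\frac{e^{2V}-1}{e^{2V}+1}$. The function name and the values $r=0.5$, $n=103$ are made up for illustration.

```python
from math import exp, log, sqrt
from statistics import NormalDist


def fisher_interval(r, n, alpha=0.05):
    """Large-sample 1 - alpha confidence interval for the correlation coefficient."""
    if n <= 3:
        raise ValueError("the interval needs n > 3")
    v = 0.5 * log((1 + r) / (1 - r))          # Fisher transformation of r
    z = NormalDist().inv_cdf(1 - alpha / 2)   # upper alpha/2 normal quantile
    v1 = v - z / sqrt(n - 3)
    v2 = v + z / sqrt(n - 3)

    def back_transform(value):
        return (exp(2 * value) - 1) / (exp(2 * value) + 1)

    return back_transform(v1), back_transform(v2)


low, high = fisher_interval(r=0.5, n=103)
print(f"95% CI for rho: [{low:.3f}, {high:.3f}]")
```

The interval is not symmetric around $r$ because the back-transformation is nonlinear, which is expected for a quantity bounded between $-1$ and $1$.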